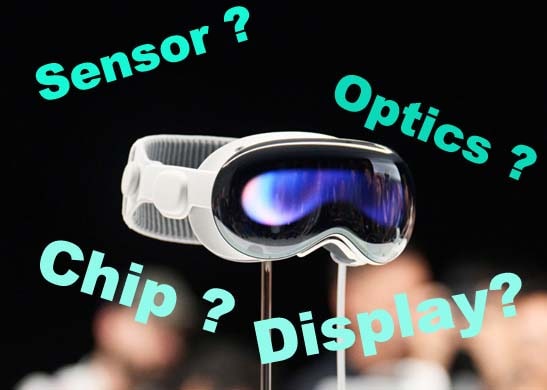

Apple’s Vision Pro is a revolutionary spatial computing device that seamlessly integrates digital content with the real world, allowing users to immerse themselves in the moment while still being able to communicate with others. The Vision Pro offers a borderless canvas that enables apps of all kinds to break through the constricting limitations of traditional displays. In addition, it introduces a fully three-dimensional user interface that can be operated using the most natural and intuitive input tools – the user's eyes, hands and voice. All of this is made possible by visionOS – the world’s first spatial operating system – and the Vision Pro’s innovative design, which incorporates an ultra-high-resolution display system with a mind-boggling 23 million pixels spread across two displays and custom Apple silicon in a unique dual-chip design. The result is a device that enables users to enjoy a truly immersive experience, in which every moment feels as if it is unfolding in front of one’s eyes, in real time.

The hardware configuration far exceeds that of mainstream products currently on the market, and empowers what Apple calls its "spatial computing" and "computable reality" capabilities. In terms of chips, the Vision Pro is equipped with Apple's self-developed M2 and R1 dual chips. In terms of optics, the device is equipped with two Sony 4K micro-OLED screens and 3P Pancake optical lenses. In addition, the device includes an impressive 12 cameras, five sensors and six microphones. All of this enables the Vision Pro to deliver:

- An ultra-high-definition display: The Vision Pro’s two micro-OLEDs are capable of delivering an 8K ultra-high-resolution display. Each pixel is 7.5 microns wide, and the two panels have a combined total of 23 million pixels. According to LatePost, this means the Vision Pro offers 40 pixels per degree (PPD) – far more than the mainstream VR products on the market today.

- Hand, eye and voice interaction: Apple has been leading the market in human-computer interaction since the 1980s. The mouse and graphical interface it popularized in the 1980s remain the principal mode of operating computers. The release of the iPhone marked the beginning of the smartphone era. And, more recently, the AirPods saw physical buttons replaced by gestures and motions. The Vision Pro sees Apple continue to break new ground in this area, with an input system controlled solely by a person’s hands, eyes and voice – with no need at all for other external devices, such as joysticks.

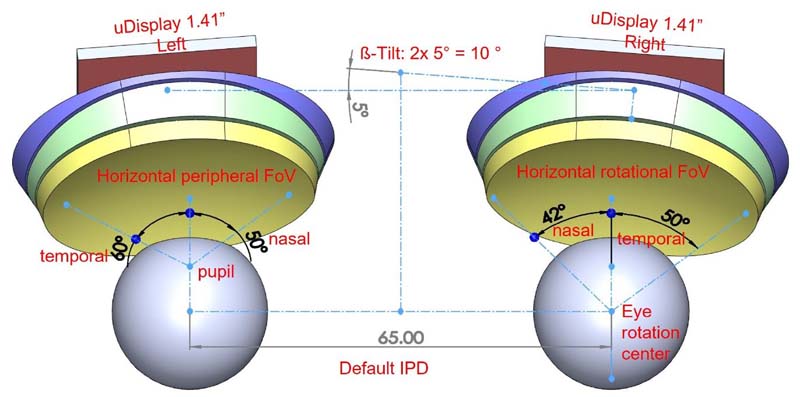

- Automatic pupil adjustment: The Vision Pro’s automatic pupil adjustment system adapts to the distance between a user’s pupils. This, combined with the 120-degree field of view that the Vision Pro offers, helps it to address the dizziness and discomfort that can arise from wearing a VR device for too long.

- EyeSight: The Vision Pro has another potentially game-changing feature, called EyeSight, which creates an artificial transparency on the headset’s outer display that allows for eye contact with those nearby and lets them know when you’re using apps or are fully immersed in an experience. In so doing, it bridges the gap between virtual and real-life scenarios.

- 3D photography, eye tracking and iris recognition: With its built-in LiDAR scanner and depth-of-field camera, the Vision Pro is the first device Apple has released that enables users to capture 3D photos and spatial videos. In addition, the infrared camera and LED matrix built into the face of the device facilitate iris recognition and eye tracking.

Chip: The Vision Pro’s unique dual-chip design delivers outstanding performance

The Vision Pro’s unique dual-chip configuration, incorporating both an M2 and an R1 chip, provides a solid base for spatial computing. The two chips fulfil different, but complementary, roles. The M2 chip is mainly used to provide powerful computing performance, and to control the temperature and noise of the device. The brand-new R1 chip, for its part, has been specially designed to handle real-time sensing data, and processes the input data collected from the device’s 12 cameras, five sensors and six microphones.

How does that translate to performance? In short, the M2 provides superb computing power, and the R1 reduces latency in the device. Working together, the two chips are capable of processing, rendering and transferring new images to the device’s displays in less than 12 milliseconds, which is eight times faster than the blink of an eye. That’s significant as, according to LatePost, a latency higher than 20 milliseconds creates a feeling of vertigo. The Vision Pro avoids that: it streams new images quickly enough that content feels like it’s appearing right in front of your eyes, in real time.
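
To put these figures in perspective, the short Python sketch below compares the quoted 12-millisecond pipeline latency with the 20-millisecond threshold attributed to LatePost, and derives the blink duration that the "eight times faster" comparison implies. The constant and function names are illustrative assumptions, not anything published by Apple.

```python
# Figures quoted in the text; the names below are illustrative only.
PIPELINE_LATENCY_MS = 12.0   # upper bound quoted for the M2 + R1 pipeline
VERTIGO_THRESHOLD_MS = 20.0  # threshold attributed to LatePost
BLINK_SPEEDUP = 8            # "eight times faster than the blink of an eye"


def latency_headroom_ms(latency_ms: float, threshold_ms: float) -> float:
    """Return how far a latency sits below the threshold (negative if above it)."""
    return threshold_ms - latency_ms


def implied_blink_ms(latency_ms: float, speedup: float) -> float:
    """Blink duration implied by 'the latency is `speedup` times faster than a blink'."""
    return latency_ms * speedup


if __name__ == "__main__":
    print(latency_headroom_ms(PIPELINE_LATENCY_MS, VERTIGO_THRESHOLD_MS))  # 8.0
    print(implied_blink_ms(PIPELINE_LATENCY_MS, BLINK_SPEEDUP))            # 96.0
```

On the quoted numbers, the pipeline sits 8 milliseconds below the threshold, and the blink used for comparison works out to roughly 96 milliseconds.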

How does the M2 chip compare with the other mainstream AR/VR chip on the market today – Qualcomm's XR, which was built specifically for the AR/VR space? We’ve put both the M2 and the Qualcomm XR through Geekbench 5 (a cross-platform processor benchmark platform) testing to compare the CPU and GPU performance of the two. In terms of CPU performance, the M2 is significantly stronger than the Qualcomm XR in both single-core and multi-core testing. This is especially true in the multi-core testing, with the M2 achieving scores close to twice those of the XR, meaning it runs much smoother and faster. In terms of GPU scores, the M2 achieved considerably better results than the XR in both Manhattan 3.0 and 3.1 frame rate tests, meaning that it is capable of delivering higher frame rates and higher-definition graphics.

A more powerful M2 chip has already been launched, and the manufacturing process is also expected to receive an upgrade. It is worth noting that Apple launched a new M2 Ultra chip at WWDC 2023. That is currently the highest-specification chip in Apple's M series of processors. Made with TSMC's second-generation 5nm process, the M2 Ultra has a 24-core CPU that is 20% faster than the M1 Ultra, a 76-core GPU that is 30% faster than the M1 Ultra, and a display engine that supports up to six Pro Display XDRs – driving more than 100 million pixels. In addition, TSMC's 3nm process has also entered mass production. Both of these developments raise the prospect of future generations of the Vision Pro being equipped with even higher-specification chips, which will take computing and transmission performance to another level.

Sensors: The Vision Pro’s combination of five sensors empowers its spatial computing capabilities

As already mentioned, the Vision Pro is equipped with a total of 12 cameras, six microphones and five sensors. The cameras, for their part, are broken down into two main RGB cameras, four lower-side-view cameras, two outer-side-view cameras, and four eye-tracking infrared cameras (on the inside of the device). The sensors include one LiDAR sensor, two depth-of-field cameras, and two IR sensors. At the same time, there’s a ring of LEDs inside the device, which project invisible light patterns onto the user’s eyes for responsive, intuitive input. The combination of sensors, allied with the device’s powerful hardware configuration and software capabilities, provides a wide range of possibilities for applications and interactions. Ultimately, they enable the device to become a masterpiece of gesture recognition, 3D imaging, eye tracking, environment awareness, and iris recognition – among other things.

1. Multiple cameras for real-time image and motion capture from all angles

The Vision Pro’s Ultra HD camera, powerful computing performance and video see-through (VST) technology enable it to fuse the virtual and the real without boundaries. The Vision Pro's VST technology captures a real-time picture through the RGB main camera at the front of the device and its multiple sensors, rapidly superimposes this on the required virtual image, and transfers it to the display in front of the person's eyes – all in a matter of milliseconds. Thanks to the powerful processing power afforded by its dual-chip (M2+R1) design, the Vision Pro solves the problem of high latency – and the associated sense of vertigo – that is a common feature of VR devices.

Similarly, the Vision Pro overcomes another issue common in VR devices – the non-adjustable nature of the optical see-through (OST) approach. By incorporating a knob on the top of the device that enables users to modify the degree of fusion between the virtual and the real worlds, it allows signals from the analog world to be presented in front of the user's eyes in a digitalized way, thus truly realizing "adjustable reality".
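
The idea of an adjustable degree of fusion can be illustrated with a simple linear blend between a passthrough camera pixel and a rendered pixel. This is a minimal sketch, not Apple's compositing pipeline: the function name `blend_pixel`, the `immersion` parameter (standing in for the knob position, from 0.0 to 1.0) and the RGB tuples are all assumptions made for illustration.

```python
from typing import Tuple

RGB = Tuple[float, float, float]


def blend_pixel(passthrough: RGB, virtual: RGB, immersion: float) -> RGB:
    """Linearly mix a camera pixel with a rendered pixel.

    immersion = 0.0 shows only the real world, 1.0 only the virtual scene.
    Values outside [0, 1] are clamped (hypothetical knob behaviour).
    """
    a = min(max(immersion, 0.0), 1.0)
    return tuple((1.0 - a) * p + a * v for p, v in zip(passthrough, virtual))


if __name__ == "__main__":
    red_camera_pixel = (1.0, 0.0, 0.0)
    blue_virtual_pixel = (0.0, 0.0, 1.0)
    # Halfway between the real and the virtual image.
    print(blend_pixel(red_camera_pixel, blue_virtual_pixel, 0.5))  # (0.5, 0.0, 0.5)
```

With an optical see-through design the real-world light reaches the eye directly, so there is no equivalent per-pixel weight to turn down; with video see-through, both inputs are digital and the mix can be set freely.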

The Vision Pro is also equipped with four lower-view cameras and two side-view cameras for more accurate head and hand tracking. Significantly, the incorporation of two infrared floodlights means this works even when the device is operated in dark environments. Traditional VR devices have struggled with bare hand tracking, and often require the use of additional equipment to work effectively. The Vision Pro, by contrast, does it with ease.

2. LiDAR and depth-of-field camera to realize 3D photography

The Vision Pro is the first device Apple has produced that is equipped with a 3D camera. Using its LiDAR scanner and depth-of-field camera, the Vision Pro enables users to take 3D photos and spatial video with the click of a button on the upper-left corner of the device. What this means is that users can record elements of their everyday life in 3D and watch them back as if they were happening right in front of their eyes. It also means that users can not only watch the 3D version of "Avatar: The Way of Water" at home, as if on a 100-inch theater screen, but also enjoy the truly immersive experience of watching basketball, soccer and other sporting events as if they were court- or pitch-side.

Combining LiDAR and depth-of-field cameras enables the device to measure depth information more accurately and convert 2D images into 3D images. LiDAR, through the direct time-of-flight (dToF) method, measures the distance to a target object by emitting a pulsed signal toward the target and measuring the time it takes the emitted photons to return after bouncing back. Apple first utilized LiDAR scanning in 2020, in the iPad Pro; the iPhone 12 Pro and iPhone 12 Pro Max (also released that year) were similarly configured with a LiDAR scanner. In the Vision Pro, the depth-of-field camera emits invisible light spots through dot-matrix projection to produce an accurate depth map of the external environment, but its effective range is short. The LiDAR scanner compensates for this with a larger, sparser pattern of spots that is suitable for scanning and modeling the overall environment. The combination of LiDAR and a depth-of-field camera enables the device to perceive the outside environment more accurately. This, combined with Apple's own software algorithm advantage, makes 3D imaging more realistic.
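
The dToF principle described above reduces to one line of arithmetic: the pulse travels to the target and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below shows only that calculation; the function name and the 20-nanosecond example are illustrative assumptions, and a real sensor also has to deal with noise, multiple returns and calibration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in a vacuum


def dtof_distance_m(round_trip_time_s: float) -> float:
    """Distance to a target from the round-trip time of a light pulse.

    The pulse covers the distance twice (out and back), hence the division by 2.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # A hypothetical 20-nanosecond round trip corresponds to roughly 3 metres.
    print(f"{dtof_distance_m(20e-9):.2f} m")
```

The example also shows why the timing has to be so precise: at room scale, the round trip is measured in nanoseconds.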

3. The Vision Pro’s internal infrared camera and LED matrix enable it to realize effective eye tracking and deliver an Optic ID function

The Vision Pro’s incorporation of an infrared camera and LED matrix enables the device to track eye position and movement in real time, thus turning the user’s eyeball into a mouse. The device uses a high-speed camera and LEDs that project an invisible light pattern onto the user's eyes to track movement. This enables users to launch and manage desired applications by eye.

The eye-tracking capability of the Vision Pro also acts as the basis for a new secure authentication system – Optic ID. This new system analyzes a user's iris under invisible LED light and then compares that to registered Optic ID data protected by the Secure Enclave to instantly unlock the device. The data is encrypted and stored on the device, ensuring the security of the user's data. In addition, Optic ID can also be used in other scenarios, such as to make payments through Apple Pay.

4. A realistic and immersive spatial audio system

The Vision Pro is equipped with Apple's most advanced spatial audio system, catering for a truly immersive experience. The Vision Pro's dual-driver speakers sit between the visor and the headband, and do not enter the ear. Its spatial audio system, operated through directional sound transmission technology, can send sound from all sides – providing the user with a more immersive feeling. At the same time, the Vision Pro analyzes the acoustic characteristics of the external environment, providing users with audio effects that mirror what is going on around them, with such sounds experienced as if coming through a window.

The Vision Pro combines acoustic measurement and dynamic tracking to deliver a great audio experience. According to MyAudio.com, the Vision Pro not only comes with acoustic metering technology from the HomePod, but also has built-in spatial audio and head tracking technology from the AirPods. Acoustic metering utilizes a microphone to detect sound reflections, which are used to sense the device's position in the space it's in and to use this as the basis for auto-tuning. At the same time, the Vision Pro tracks head movement through precise positioning, bringing a more immersive audio experience.

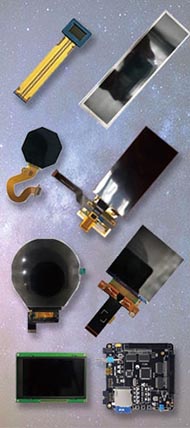

Optics: Pancake optics plus micro-OLED represents a top-of-the-line solution

1. Layer surface pancake provides high clarity and transparency

Apple’s Vision Pro features 3P Pancake optics, which constitutes the top of the line for current VR devices. 3P Pancake optics provide extreme clarity and transparency, rendering and playing back video in 4K clarity with a wide color gamut and high dynamic range. The solution makes text clear and readable from all angles, improving the user's experience of looking at web pages and reading information. It also enables the Vision Pro to offer a 120-degree field of view, which is much wider than that offered by the latest flagship products from other mainstream manufacturers.

The folded-light-path solution offered by Pancake optics technology utilizes the principle of polarized light and includes two sets of lenses. After the image on the display enters the half-reflective, half-transparent beam splitter, the light rays are folded back several times through the lenses, phase retarder, and reflective polarizing film, and finally enter the human eye through the polarizing reflector.

At present, the vast majority of manufacturers using Pancake optics technology use a two-piece foldback design, which incorporates a smaller number of lenses, comes at relatively low cost and can be produced through a simple mass production process. The three-piece 3P Pancake optics used in the Vision Pro use lens combinations to reduce the edge distortion and vignetting seen with traditional Fresnel lenses, delivering higher imaging quality and a larger field of view. Although incorporating the Pancake route still presents designers with a series of problems (chiefly relating to complex optical design, lower light efficiency, susceptibility to artifacts, smaller FOV for mass production solutions, and higher cost), there is still room for continued improvement on the Pancake technology front. For instance, Pancake technology can, in theory, deliver up to a 200-degree field of view, while there’s no upper limit in terms of panel resolution. In other words, the technology has the capacity, with sufficient imagination and ingenuity, to deliver even better results – while the high production costs will almost certainly fall.
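
The "lower light efficiency" of the folded path follows from the half-reflective, half-transparent beam splitter: in a simplified model, each ray must be transmitted by the splitter once and reflected by it once before it reaches the eye. The sketch below captures only that idealized model, assuming polarized input light and otherwise lossless elements; the function name is illustrative, and real modules lose additional light elsewhere.

```python
def folded_path_efficiency(splitter_transmittance: float = 0.5) -> float:
    """Idealized throughput of a folded (Pancake) light path.

    Assumes the light is transmitted once and reflected once by the same
    beam splitter, and that every other element is lossless.
    """
    t = splitter_transmittance
    if not 0.0 <= t <= 1.0:
        raise ValueError("transmittance must be between 0 and 1")
    return t * (1.0 - t)


if __name__ == "__main__":
    print(folded_path_efficiency(0.5))  # 0.25
```

Because the product of transmittance and reflectance peaks at a 50/50 split, this simplified model caps throughput at 25%, which is one reason bright panels such as micro-OLED pair naturally with Pancake optics.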

2. Micro-OLED: Monocular 4K resolution, PPD far ahead of the rest

The Vision Pro’s two micro-OLED screens ensure that text is clear and sharp from whatever angle you look at it. Each the size of a postage stamp, the panels together contain a staggering 23 million pixels (with each pixel being just 7.5 microns wide) and deliver monocular 4K resolution. According to LatePost, the Vision Pro offers 40 pixels per degree (PPD). By comparison, Meta’s Quest Pro and the Pico 4 have PPDs of 17 and 20.6, respectively. This is significant, as the closer one gets to 60 PPD (which constitutes the human eye’s upper limit), the clearer the display image is perceived to be by the user.

Silicon-based OLEDs deliver outstanding performance and offer an improved user experience thanks to their thin and lightweight nature, self-illumination and fast response times. According to TOPWAY and OLED industry data, the self-luminous characteristics of silicon-based OLED mean there’s no need for a backlight – allowing for much thinner and lighter displays that use 30-40% less power than LCD. Silicon-based OLEDs, like those used in the Vision Pro, are one-tenth of the volume of the displays traditionally used in VR devices, which effectively leads to increased pixel density while reducing the weight by more than 50%. Such displays also have nanosecond response speeds – much faster than the millisecond response speeds of LCD and even the microsecond response speeds of traditional OLED – which enhances the interactive immersion of MR equipment and improves the experience of using it.
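
The resolution figures above can be cross-checked with a little arithmetic. The sketch below splits the quoted 23 million pixels across the two panels, compares the per-eye count with a standard 4K UHD frame (3840 × 2160), and compares the quoted PPD values. It also includes the usual first-order definition of PPD as horizontal pixels divided by horizontal field of view; the panel used to demonstrate that function is hypothetical and does not represent Vision Pro specifications.

```python
TOTAL_PIXELS = 23_000_000    # quoted combined total for both panels
UHD_4K_PIXELS = 3840 * 2160  # pixels in a standard 4K UHD frame


def pixels_per_eye(total_pixels: int, displays: int = 2) -> int:
    """Split a combined pixel count evenly across the displays."""
    return total_pixels // displays


def average_ppd(horizontal_pixels: float, horizontal_fov_deg: float) -> float:
    """First-order pixels-per-degree estimate: pixels spread evenly over the FOV."""
    return horizontal_pixels / horizontal_fov_deg


if __name__ == "__main__":
    per_eye = pixels_per_eye(TOTAL_PIXELS)
    print(per_eye, per_eye > UHD_4K_PIXELS)  # 11500000 True

    # Quoted PPD figures: Vision Pro 40, Quest Pro 17, Pico 4 20.6.
    print(round(40 / 17, 2), round(40 / 20.6, 2))  # 2.35 1.94

    # Hypothetical panel: 3,600 pixels across a 90-degree field of view.
    print(average_ppd(3600, 90))  # 40.0
```

On the quoted figures, each eye receives about 11.5 million pixels, more than a 4K UHD frame, and the Vision Pro's PPD is roughly 2.4 times that of the Quest Pro and 1.9 times that of the Pico 4.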

3. EyeSight enables users to stay connected with the people around them

In addition to the two micro-OLED displays inside, the Vision Pro utilizes a curved OLED screen on the outside of the device to deliver a two-way perspective effect. The exterior-facing display reproduces the user’s eye image, captured by the interior-facing sensors, to create a see-through effect. When someone approaches a user wearing the Vision Pro, the viewing area of the device becomes transparent, allowing the user to see the approaching person, while the approaching person can also see the user’s eyes when the user is focused on using the device. EyeSight also lets people in the outside world know when you can’t see them.

4. Automatic pupil distance adjustment dramatically reduces, or even eliminates, vertigo

The Vision Pro is equipped with two independent sets of pupil distance adjustment devices, which can significantly reduce or even eliminate vertigo. People’s left and right eyes see a different picture, which is processed by the brain to form a complete 3D picture. However, the distance between one’s pupils varies from one person to the next. Thus, when a user dons VR equipment that is not able to adjust to the specific distance between their pupils, phenomena such as “ghosting” will occur and a feeling of dizziness and nausea will arise as a result. The Vision Pro captures the position of the eyeballs in real time through its innovative eye-tracking system, and adjusts to the user’s pupil distance accordingly. This helps to significantly reduce or even eliminate the feeling of vertigo caused by the vergence-accommodation conflict.

Motorized stepless pupil adjustment has become a basic feature of high-end MR/VR devices. Existing interpupillary distance (IPD) adjustment can be divided into independent monocular IPD adjustment and binocular integrated IPD adjustment, of which independent monocular IPD adjustment requires two sets of miniature drive mechanisms. According to a report published on Yingwei.com, the automatic pupil distance adjustment system that the Vision Pro uses will be faster and more seamless than that of Meta’s Quest Pro, without the need for a manual dial or setup through a slider, which makes it easier for the user to get started and improves the overall user experience.

5. Pancake optics leave room for diopter adjustment

The Vision Pro currently utilizes a magnetic lens interface for myopic users, but refractive adjustment is expected to be introduced in the future. The magnetic lens interface is located at eye position within the headset. At present, the device cannot be worn over glasses. The solution, for those who need to wear glasses, is to purchase specialist prescription lenses. Apple has partnered with Zeiss to produce these vision-correction lenses, but they’re likely to be expensive: Bloomberg has reported that they’ll cost between $300 and $600 (USD). However, the Pancake optics technology that the Vision Pro utilizes offers a potential solution, enabling refractive adjustment of one (or both) lenses to improve the VR experience of myopic users.

Other Hardware

The Vision Pro’s modularized design makes the whole machine look more aesthetically pleasing. In addition to the hardware configuration mentioned above, the Vision Pro is also equipped with the following structural and functional pieces:

- On the front of the headset is a three-dimensionally constructed laminated glass with an optically polished surface. This can be used not only for EyeSight's lenses, but also for a range of environmentally aware cameras and sensors.

- Two physical buttons on the outer frame – one for taking photos and videos, and the other for calling up the main page and adjusting immersion.

- An outer frame made of a specific aluminum alloy that allows the product to be shaped as a whole. Constituting the device’s main structure, this frame is not only pleasing on the eye, but also effectively houses and protects the various components inside.

- Lightweight eyecups that are available in different shapes and sizes to fit the user's face. The device also has flexible side straps extending from the frame that ensure it fits snugly around the head and keeps the audio components close to the ears.

- A 3D woven headband that is breathable and stretchy, and can be rested on a pillow. The connection is simple and reliable, allowing users to change sizes and styles, and a rotating dial adjusts the tightness of the headband for a more comfortable user experience.

- A power system that allows the device to be used all day when plugged in, or for up to two hours on the external high-performance battery.

Having been in the pipeline for more than five years, the forthcoming release of Apple's first-generation Vision Pro is likely to benefit the wider supply chain, accelerating stock preparation, the production of related components and production/testing equipment, and bringing new business to machine-assembly companies.