If you watched a humanoid robot demo two years ago, the "face" was usually an afterthought. A flat LCD bolted onto a plastic head. Two pixel-art eyes that blinked on cue. It looked fine in a YouTube clip, but nobody was pretending it was a finished design.

That window is closing fast.

In late March 2026, AgiBot — the Shanghai company you might know as Zhiyuan Robotics — rolled its 10,000th general-purpose humanoid off the production line. The first thousand units took them roughly two years. The jump from 5,000 to 10,000 took a single quarter. Unitree is scaling on a similar curve, and TrendForce is now projecting global humanoid output to grow 94% in 2026, with these two Chinese players alone accounting for nearly 80% of shipments.

Translation: humanoids are leaving the lab. They're showing up on tablet assembly lines, in hotel lobbies, in retail showrooms, in hospital corridors. And the second a robot has to share space with a human customer or a human coworker, the face stops being decoration. It becomes the interface.

That's where flexible OLED comes in.

The face is the UX

Look at the photo at the top of this post. That's an AgiBot unit, with the cluster of robots behind it that you've probably seen circulating on Chinese tech Twitter. Notice what your eye goes to first. Not the arms. Not the chassis. The face. Specifically, the eyes.

There's a reason for that. Humans are wired to read intent off other faces in milliseconds. When a humanoid robot is going to hand you a coffee, walk past you in a warehouse aisle, or pick up a fragile component next to your hand, you want to know — instantly — what it's about to do. A static plastic shell can't tell you that. A bright, responsive display can.

The design problem is that a robot head is not a phone. It's curved. It's compact. It vibrates. It runs in environments that swing from cold loading docks to hot factory floors. Whatever you put behind that "face" has to bend to the industrial design, survive the duty cycle, and still look alive at a glance.

That's a flexible OLED display problem, not an LCD problem.

Why flexible OLED, specifically

A few things stack up in OLED's favor for this use case, and they all matter at the same time:

True blacks. OLED pixels emit their own light, so when a pixel is off, it is genuinely off. On a robot face, that means the lit parts of the eye glow against a perfectly black surround. No backlight bleed, no grey halo around the iris. From two meters away the eye reads as an eye, not as a screen pretending to be one.

Curved and conformable. A flexible OLED panel can be bent to a fixed radius during assembly, which lets industrial designers wrap the display around the contour of the head instead of leaving an awkward flat rectangle on the front. This is the difference between a robot face that looks designed and one that looks 3D-printed in someone's garage.
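To get a feel for what "bent to a fixed radius" means on a head, here is a minimal sketch of the arc geometry. It assumes the panel follows a simple circular arc along its long axis. The 100 mm radius in the usage note is purely illustrative, not a published bend spec, and `bend_geometry` is a hypothetical helper name.

```python
import math


def bend_geometry(arc_length_mm: float, radius_mm: float) -> dict:
    """Geometry of a panel of the given length bent along a circular arc.

    Assumes a single constant bend radius (an idealization; real panels
    also have a minimum radius set by the manufacturer).
    """
    if arc_length_mm <= 0 or radius_mm <= 0:
        raise ValueError("length and radius must be positive")
    theta = arc_length_mm / radius_mm  # wrap angle in radians
    if theta > 2 * math.pi:
        raise ValueError("panel is longer than the full circle at this radius")
    return {
        # how far around the head the panel wraps
        "wrap_angle_deg": math.degrees(theta),
        # straight-line width the bent panel occupies
        "chord_mm": 2 * radius_mm * math.sin(theta / 2),
        # how far the centre of the panel bulges forward of its edges
        "sagitta_mm": radius_mm * (1 - math.cos(theta / 2)),
    }
```

For example, `bend_geometry(157, 100)` describes a 157 mm strip on a hypothetical 100 mm radius: it wraps roughly a quarter of the way around the head and sits a little under 30 mm proud of its own edges. Always check the real minimum bend radius with the supplier before committing to a head contour.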

Wide operating temperature. Robots don't live in 22°C offices. They live in warehouses in Chongqing in August and cold rooms in northern Europe in January. Industrial-grade flexible OLED panels are spec'd from −30°C or even −40°C up to +70°C / +80°C, which is the realistic envelope for an embodied AI deployment.

Low power, fast response. With no backlight, an OLED only draws power for the pixels it lights, so a mostly black face with two bright eyes is cheap to run. That matters when the head shares a battery budget with two arms, two legs, and a stack of inference accelerators. Sub-2ms pixel response means the moment the model decides the robot should look surprised, the face is already showing it.

High PPI at close range. People stand close to robots. A 400+ PPI panel holds up under that scrutiny in a way a 200 PPI industrial LCD simply does not.

So far, so good in theory. The interesting question is: which panels actually fit a humanoid head?

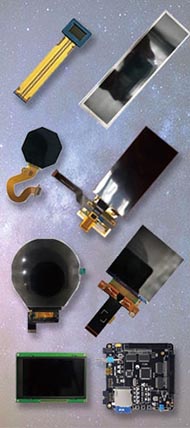

Two flexible OLED panels worth looking at

At Panox Display we spend a lot of time matching panels to weird form factors: dive computers, AR glasses, automotive clusters, and now, increasingly, robot heads. Two of our flexible OLED SKUs keep coming up in conversations with embodied AI teams. I'll be honest about why.

1. The 6.52" 2520×840 long-strip flexible OLED — for stretched, wrap-around faces

6.52 inch Flexible OLED, 2520×840, In-cell Touch

This one is interesting because of its aspect ratio. 3:1. It's a long strip — 157mm tall, 52mm wide active area, 407 PPI, 90Hz refresh, 10000:1 contrast, in-cell touch already integrated. The panel was originally aimed at smartphones and AR/MR vehicle dashboards, but the proportions are almost suspiciously well-suited to a humanoid head where you want a single continuous "visor" running across the eyeline.
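If you want to sanity-check those numbers against your own CAD, pixel density and active-area size follow directly from the resolution and the diagonal. The sketch below assumes square pixels and a diagonal measured across the active area; `panel_geometry` is a hypothetical helper, not part of any vendor tool.

```python
import math


def panel_geometry(long_px: int, short_px: int, diagonal_in: float):
    """Return (ppi, long_side_mm, short_side_mm) for a rectangular panel.

    Assumes square pixels and that the quoted diagonal is the
    active-area diagonal in inches.
    """
    if min(long_px, short_px) <= 0 or diagonal_in <= 0:
        raise ValueError("all inputs must be positive")
    diagonal_px = math.hypot(long_px, short_px)  # diagonal in pixels
    ppi = diagonal_px / diagonal_in              # pixels per inch
    mm_per_px = 25.4 / ppi                       # pixel pitch in millimetres
    return ppi, long_px * mm_per_px, short_px * mm_per_px


ppi, long_mm, short_mm = panel_geometry(2520, 840, 6.52)
print(f"{ppi:.0f} PPI, {long_mm:.0f} mm x {short_mm:.0f} mm")
```

Running it on 2520×840 at 6.52" reproduces the 407 PPI and the roughly 157 mm by 52 mm active area quoted above. The same function works for any panel you are comparing.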

Why it works on a robot:

- A long strip means you can render two eyes plus a status indicator (charging, listening, error, attention direction) on a single seamless panel — no bezel between the eyes, which is what kills the illusion on most current builds.

- 90Hz refresh keeps gaze tracking and saccade animations smooth. If your robot's eyes lag the head movement by even 50ms, humans notice and find it uncanny.

- The flexible substrate lets you bend the panel to follow the curve of the brow, instead of mounting it dead flat behind a plastic window.

- Operating temperature −30 to +80°C is realistic for indoor industrial and most outdoor commercial deployments.

- Rated sunlight readable at 430 nits, which covers showroom work; for outdoor reception in direct sun, check legibility on a sample before committing.
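To put the 90Hz and 50ms figures side by side, here is a rough latency-budget sketch. It assumes the display's worst-case contribution is one full refresh interval (a frame that just misses a refresh waits for the next one) plus the sub-2ms pixel response mentioned earlier. That is a simplification, and the function names are ours for illustration.

```python
import math


def frame_time_ms(refresh_hz: float) -> float:
    """Duration of one refresh interval in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


def whole_frames_in_budget(budget_ms: float, refresh_hz: float) -> int:
    """How many complete refresh intervals fit inside a latency budget."""
    return math.floor(budget_ms * refresh_hz / 1000.0)


def remaining_budget_ms(budget_ms: float, refresh_hz: float,
                        pixel_response_ms: float = 2.0) -> float:
    """Time left for sensing, inference and rendering.

    Assumption: the display costs at most one refresh interval of waiting
    plus the pixel response time.
    """
    return budget_ms - frame_time_ms(refresh_hz) - pixel_response_ms


print(f"frame time at 90 Hz: {frame_time_ms(90):.1f} ms")
print(f"whole frames in 50 ms: {whole_frames_in_budget(50, 90)}")
print(f"left for the rest of the pipeline: {remaining_budget_ms(50, 90):.1f} ms")
```

At 90Hz a frame lasts about 11 ms, so a 50 ms budget is only four whole frames. Under these assumptions the display uses roughly 13 ms of it, leaving the rest for head tracking, the model, and rendering.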

If you've been shopping for a "visor-style" humanoid face panel and getting quoted on awkwardly cut full-size phone displays with 30% of the pixels disabled, this is the panel to ask us about.

2. The 6.67" 2400×1080 flexible AMOLED — for full-face expressive displays

6.67 inch Flexible AMOLED, 1080×2400, 2K, In-cell PCAP

This is the other direction. Instead of a strip, you get a full smartphone-class panel — 6.67", FHD+ 2K, 394 PPI, 700 nits typical brightness, 80,000:1 contrast, 101% NTSC color, in-cell PCAP touch, and a wide −40 to +70°C operating range. The panel is built on Tianma's flexible OLED line and ships with the NT37705 driver IC, so the integration story is mature.

Why it works on a robot:

- Full-face expressive displays, the kind where the entire front of the head is the screen and the "face" is rendered in software, need real estate, real color depth, and real brightness. This panel delivers all three.

- 700 nits typical and "readable under sun" treatment mean the face stays legible in retail, hospitality, and exhibition environments where you can't control the lighting.

- 80,000:1 contrast is genuinely OLED-class. When the model wants to display a subtle blink or a sideways glance, the gradient holds up. On a backlit LCD it would muddy out.

- −40°C cold-weather rating is rare and useful — if your customer is deploying in Northern Europe, Korea, or Hokkaido, this panel survives the loading dock.

- A mature driver IC and available HDMI/Type-C controller boards mean your engineers can be running test patterns the day the sample arrives, not three weeks later.

This is the panel for teams building the next generation of expressive humanoids — the ones where the entire face is one continuous OLED canvas, and the brand is built on how alive the robot looks.

What we're seeing in the market

A few patterns from the inquiries we've received over the last six months:

- Most teams underestimate operating temperature requirements. They spec a consumer-grade smartphone panel, then realize it doesn't survive cold storage facilities or outdoor deployments. Industrial-grade flexible OLED with −30°C or −40°C ratings is non-negotiable for serious embodied AI work.

- Teams overestimate how much custom tooling they need. Both panels above ship with established driver ICs, in-cell touch, and reference boards. You don't need to spin a custom display from zero — you need to pick the right off-the-shelf flexible OLED and integrate it cleanly.

- The "eyes vs. full face" question is a product decision, not a technical one. Some brands want a friendly, anthropomorphic face (full panel, 6.67" 2K). Others want a sleeker, more industrial visor look (long-strip 6.52"). Both work; pick the one that matches your brand.

- Touch matters more than people think. Even if the robot's primary input is voice and vision, having an in-cell touch panel on the face means a human can tap it to wake the robot, dismiss a prompt, or confirm a transaction. Both of these panels include integrated touch.
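The temperature point is easy to turn into a first-pass filter. The sketch below encodes only the figures quoted in this post for the two panels and shortlists whichever ones cover a deployment's temperature envelope and brightness floor. The `Panel` class and `shortlist` function are hypothetical names for illustration, and a real selection should be checked against the datasheets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Panel:
    name: str
    min_temp_c: int        # lowest rated operating temperature
    max_temp_c: int        # highest rated operating temperature
    brightness_nits: int   # brightness figure quoted in this post
    style: str             # "visor" or "full-face"


# Values taken from the panel descriptions above.
PANELS = [
    Panel('6.52" 2520x840 long-strip flexible OLED', -30, 80, 430, "visor"),
    Panel('6.67" 1080x2400 flexible AMOLED', -40, 70, 700, "full-face"),
]


def shortlist(env_min_c: int, env_max_c: int, min_nits: int = 0,
              panels=PANELS) -> list:
    """Panels whose rated range covers the environment and brightness floor."""
    return [
        p for p in panels
        if p.min_temp_c <= env_min_c
        and p.max_temp_c >= env_max_c
        and p.brightness_nits >= min_nits
    ]


# A cold-storage deployment that dips to -35 C
for panel in shortlist(-35, 25):
    print(panel.name)
```

With these figures, a site that dips to −35°C leaves only the 6.67" panel, a site that reaches +75°C leaves only the 6.52" strip, and an ordinary indoor envelope keeps both on the list so the choice comes back to the visor versus full-face question.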

Where to go from here

If you're building a humanoid robot in 2026 and the face panel is still on your "we'll figure it out later" list, it's later. The reference players are scaling, the design language is being set right now, and the gap between robots that look alive and robots that look like rolling tablets is becoming visible to end customers.

We have both of these flexible OLED panels in stock at Panox Display, with datasheets, sample units, HDMI/Type-C controller boards, and free connectors for development. If you want to talk through which one fits your head design — or you need a customized cover glass, custom touch panel, or a bend radius spec we haven't published — get in touch. We've supported display integrations for everyone from indie hardware teams to industrial OEMs over the last seven years, and humanoid robotics is the application we're most excited about right now.

Take a look at the panels:

- 6.52" Flexible OLED 2520×840 (long-strip, visor-style)

- 6.67" Flexible AMOLED 2K (full-face expressive)

Or just send us your robot's head CAD and we'll tell you which one fits.